Even though the title suggests this is very specific to the SAFE Network and to implementing an RDF store on it, I believe this is a problem that many others could face when trying to store RDF triples on any kind of key-value storage.

I’ll be explaining the problem from the SAFE Network perspective though.

Background

A few months ago we decided that RDF data needed to be a first-class citizen on the SAFE Network, and we started researching and building some proof-of-concept (PoC) implementations of APIs/utilities that allow us, and other developers, to store applications’ data on the network as RDF data.

Given the data types currently available on the SAFE Network, the question I’m trying to raise with this post is simple: what’s the best way to store mutable RDF triples on a key-value store?

By “best” I mean efficient not only for storing the triples but also for querying the graphs (for example, queries that filter by a certain subject or predicate), while minimising the network traffic those queries need.
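To make the two kinds of query concrete: filtering by subject or by predicate can be modelled as matching a triple pattern in which any position may be a wildcard. The following is a minimal TypeScript sketch over an in-memory list of triples. The `Triple`/`Pattern` types, the `match` helper and the example `safe://` IRIs are hypothetical; this is not the rdflib.js or SAFE query API.

```typescript
// Hypothetical in-memory triple: [subject, predicate, object].
type Triple = [string, string, string];

// A pattern position set to null acts as a wildcard.
type Pattern = [string | null, string | null, string | null];

// Return every triple whose non-wildcard positions equal the pattern's.
function match(triples: Triple[], pattern: Pattern): Triple[] {
  return triples.filter((t) =>
    pattern.every((p, i) => p === null || p === t[i])
  );
}

const FOAF = "http://xmlns.com/foaf/0.1/";
const graph: Triple[] = [
  ["safe://alice#me", FOAF + "name", "Alice"],
  ["safe://alice#me", FOAF + "knows", "safe://bob#me"],
  ["safe://bob#me", FOAF + "name", "Bob"],
];

// Filter by subject: everything said about Alice.
const aboutAlice = match(graph, ["safe://alice#me", null, null]);
// Filter by predicate: every foaf:name in the graph.
const names = match(graph, [null, FOAF + "name", null]);

console.log(aboutAlice.length, names.length); // 2 2
```

Both queries here scan the whole in-memory list. On the network, whether such a query can avoid downloading the whole document depends on how the triples are laid out in storage, which is exactly the question above.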

I’ll try to leave out of the discussion what the API for this would look like, and focus only on how to serialise the RDF docs/graphs to store them on the network. To make this very clear: we want interoperability with other systems, but we need to decide how to serialise the RDF data for storage regardless of how API consumers send/retrieve the RDF docs.

Data types currently available

In the SAFE Network there are currently two storage data types:

- ImmutableData: a blob stored at a predictable location which is based on the hash of its contents.

- MutableData: a key-value storage type which allows independent mutations of each key-value pair, as well as fetching a key-value pair by providing a key.
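The property of MutableData that matters most for this discussion is that entries can be read and mutated one at a time. A minimal in-memory sketch of those semantics follows. It assumes nothing about the real SAFE API: the `MutableDataModel` class and its method names are hypothetical, and real details such as entry versioning and permissions are left out.

```typescript
// Hypothetical in-memory model of MutableData semantics; NOT the SAFE API.
class MutableDataModel {
  private entries = new Map<string, string>();

  // Add a new entry; fails if the key already exists.
  insert(key: string, value: string): void {
    if (this.entries.has(key)) throw new Error(`key already exists: ${key}`);
    this.entries.set(key, value);
  }

  // Replace the value of one existing entry, leaving all others untouched.
  update(key: string, value: string): void {
    if (!this.entries.has(key)) throw new Error(`no such key: ${key}`);
    this.entries.set(key, value);
  }

  // Fetch a single entry by key, without reading the rest of the object.
  get(key: string): string | undefined {
    return this.entries.get(key);
  }

  size(): number {
    return this.entries.size;
  }
}

const md = new MutableDataModel();
md.insert("profile", "v1 of the profile");
md.insert("posts", "v1 of the posts");
md.update("profile", "v2 of the profile"); // only this entry changes

console.log(md.get("profile"), "|", md.get("posts"));
// v2 of the profile | v1 of the posts
```

On the network each `get` would presumably be its own request, which is what makes a layout with many small entries attractive when only part of a document is needed.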

Storing Immutable RDFs

Storing immutable RDF documents is straightforward: the document can be stored as an ImmutableData object on the network, and the user decides which serialisation format to use, e.g. Turtle, JSON-LD, etc.
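A small sketch of why this is simple, and also why it only suits immutable documents: the blob’s address is derived from its content, so the same Turtle document always lands at the same address and any edit produces a new one. The `putImmutable` function and the map standing in for the network are hypothetical, and `toyHash` is a non-cryptographic FNV-1a-style stand-in for whatever content hash the network actually uses.

```typescript
// Toy 32-bit FNV-1a-style hash over UTF-16 code units, standing in for the
// network's content hash. NOT cryptographic; only shows content addressing.
function toyHash(content: string): string {
  let h = 0x811c9dc5;
  for (let i = 0; i < content.length; i++) {
    h ^= content.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h.toString(16).padStart(8, "0");
}

// Hypothetical ImmutableData model: the address is derived from the content.
const immutableStore = new Map<string, string>();

function putImmutable(content: string): string {
  const address = toyHash(content);
  immutableStore.set(address, content);
  return address;
}

// The user chooses the serialisation; here, a small Turtle document.
const turtleV1 = `@prefix foaf: <http://xmlns.com/foaf/0.1/> .
<safe://alice#me> foaf:name "Alice" .
`;
const addressV1 = putImmutable(turtleV1);

// "Editing" the document means storing a new blob at a new address;
// the original remains readable, unchanged, at addressV1.
const turtleV2 = turtleV1.replace('"Alice"', '"Alice Smith"');
const addressV2 = putImmutable(turtleV2);

// The same content always maps to the same address.
console.log(putImmutable(turtleV1) === addressV1); // true
```

Anyone holding the old address keeps seeing the old version, which is why a document that has to change in place needs MutableData instead.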

Storing Mutable RDFs

Storing mutable RDFs is where we need to consider several options, and where we are looking for more input/ideas. As explained above, the only way to have mutable documents on the SAFE Network, at least with what’s currently available, is to store them as MutableData objects, which are key-value stores.

The SAFE APIs could actually store the data in any non-standard RDF serialisation format: as long as the API serialises/deserialises the data when it’s written/fetched, the format is transparent to a developer using that API, who will see RDF docs going in and coming out.

However, since that’s just one of the APIs, other developers may bypass it and access the data stored on the network through the lower-level APIs, in which case they will see data that is not standard serialised RDF at all. This is why we want not only APIs that help developers with RDF, but also the data itself to be stored in one of the standard serialisation formats (Turtle, JSON-LD, etc.).

There is one more thing we considered: even if the data is stored in a standard serialisation format, if I (as a developer) try to read/write it from a programming language or framework with no RDF utilities/libraries able to parse that serialisation, I’m in the same situation as in the scenario above, and I’ll have to write my own parser and utilities to read and query it.

This is why we thought JSON-LD has an advantage in this respect: we can assume everyone knows how to handle JSON objects, and there is a vast number of libraries and utilities in all kinds of programming languages to parse and manage them.
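To illustrate the point, a JSON-LD value can be read with nothing but the language’s built-in JSON parser. The document below and the `expandKey` helper are hypothetical examples, not taken from the PoC; `expandKey` expands a compact `prefix:name` key by hand using the document’s own `@context`.

```typescript
// A JSON-LD value as it might be stored (illustrative only).
const entryValue = `{
  "@context": { "foaf": "http://xmlns.com/foaf/0.1/" },
  "@id": "safe://alice#me",
  "@type": "foaf:Person",
  "foaf:name": "Alice"
}`;

// No RDF library needed: the built-in JSON parser is enough to read it.
const doc = JSON.parse(entryValue) as Record<string, unknown>;
const context = doc["@context"] as Record<string, string>;

// Expand "prefix:name" to a full IRI when the prefix is defined in the
// context; anything else (e.g. "@id" or "safe://...") is returned as-is.
function expandKey(key: string, ctx: Record<string, string>): string {
  const colon = key.indexOf(":");
  if (colon < 0) return key;
  const prefix = key.slice(0, colon);
  return Object.prototype.hasOwnProperty.call(ctx, prefix)
    ? ctx[prefix] + key.slice(colon + 1)
    : key;
}

console.log(doc["@id"], doc["foaf:name"]); // safe://alice#me Alice
console.log(expandKey("foaf:name", context)); // http://xmlns.com/foaf/0.1/name
```

This hand-rolled expansion only works because the reader knows the shape of this particular context. The general case (nested contexts, `@graph`, term definitions that are objects rather than strings) is what a JSON-LD processor is for, so the advantage is readability without an RDF library rather than full RDF semantics.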

So what we have on the table is:

- A key-value storage for our serialised RDFs

- We want to minimise the network traffic when making queries whose results may be only a subset of the graphs contained in a single RDF doc

- We want to store the RDF using one of the standard serialisation formats

- We want developers to be able to parse and read the RDF data even if they don’t have access to any RDF lib/utility/API, to help drive adoption of RDF.

As a separate discussion: on top of all this, we want to expose APIs/utilities that make it easy for developers to manage RDF data and that also provide interoperability with other systems, including Solid apps.

For example, by supporting several serialisation formats for importing/exporting RDF data, as in the case of Solid, which seems to mainly support Turtle.
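As a rough sketch of the export direction, flat JSON-LD node objects can be written out as N-Triples, and since N-Triples is a subset of Turtle the output is also valid Turtle. The node shape here (an `@id` plus full predicate IRIs as keys, with string literals or `{ "@id": ... }` references as values) is an assumption made for illustration, and `toNTriples` is a hypothetical helper, not part of rdflib.js or the SAFE APIs.

```typescript
// ASSUMED flat node shape: "@id" plus full predicate IRIs as keys;
// values are string literals, { "@id": ... } references, or arrays of those.
type NodeObject = { "@id": string; [predicate: string]: unknown };

// Escape a literal using N-Triples string escapes (backslash first).
function escapeLiteral(value: string): string {
  return value
    .replace(/\\/g, "\\\\")
    .replace(/"/g, '\\"')
    .replace(/\n/g, "\\n")
    .replace(/\r/g, "\\r");
}

// Turn one object value into an N-Triples term. IRIs are assumed valid.
function termToNT(value: unknown): string {
  if (typeof value === "string") return `"${escapeLiteral(value)}"`;
  if (typeof value === "object" && value !== null && "@id" in value) {
    return `<${String((value as { "@id": unknown })["@id"])}>`;
  }
  throw new Error(`unsupported value: ${JSON.stringify(value)}`);
}

// Emit one N-Triples line per (subject, predicate, object).
function toNTriples(nodes: NodeObject[]): string {
  const lines: string[] = [];
  for (const node of nodes) {
    for (const [predicate, value] of Object.entries(node)) {
      if (predicate === "@id") continue;
      for (const object of Array.isArray(value) ? value : [value]) {
        lines.push(`<${node["@id"]}> <${predicate}> ${termToNT(object)} .`);
      }
    }
  }
  return lines.join("\n") + "\n";
}

const alice: NodeObject = {
  "@id": "safe://alice#me",
  "http://xmlns.com/foaf/0.1/name": "Alice",
  "http://xmlns.com/foaf/0.1/knows": { "@id": "safe://bob#me" },
};

console.log(toNTriples([alice]));
// <safe://alice#me> <http://xmlns.com/foaf/0.1/name> "Alice" .
// <safe://alice#me> <http://xmlns.com/foaf/0.1/knows> <safe://bob#me> .
```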

PoC implementation

By now the path we took to implement our PoC is probably apparent, so, briefly, this is what we’ve done:

- Since for this PoC we wanted to demonstrate scenarios where we needed to mutate RDF docs, we decided to store them on MutableData objects (this relates to item #1 above). These scenarios are:

  - Having a CRUD for WebIDs (SAFE WebID Mgr app)

  - Publishing posts linked to WebIDs with our Patter social app

- We wanted to make sure that in the future we can fetch only some subgraphs of a single RDF doc from the network. We thought that storing each subgraph as a key-value pair would be beneficial, since we can then send a request to the network to fetch just a subset of the graphs (this relates to item #2 above).

- Since the MutableData object is a key-value store and we use the subgraph id as the key, we thought that storing the mapped value as JSON-LD would cover the scenario described above in item #4.

- We used the rdflib.js library, since it gave us the capabilities to serialise/deserialise RDF docs in several formats, as well as some querying mechanisms we can start playing with. We exposed an RDF API which makes use of this library plus the functionality to store/retrieve the triples to/from the SAFE Network.
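The layout described in the list above can be sketched as follows, with a `Map` standing in for one MutableData object. This is a hypothetical illustration, not the PoC’s code: it ASSUMES a “subgraph” is the set of triples sharing a subject and that its id (the entry key) is that subject IRI. The `Triple` shape, `toEntries` and `fetchSubgraphs` are made-up names.

```typescript
// Hypothetical triple shape: literal objects are plain strings,
// IRI objects are JSON-LD node references ({ "@id": ... }).
type Obj = string | { "@id": string };
type Triple = { s: string; p: string; o: Obj };

// ASSUMPTION: subgraph = all triples sharing a subject; id = subject IRI.
function toEntries(triples: Triple[]): Map<string, string> {
  const bySubject = new Map<string, Record<string, unknown>>();
  for (const { s, p, o } of triples) {
    let node = bySubject.get(s);
    if (node === undefined) {
      node = { "@id": s };
      bySubject.set(s, node);
    }
    // Full predicate IRIs are valid JSON-LD keys without an @context;
    // a repeated predicate collects its objects into an array.
    const prev = node[p];
    node[p] = prev === undefined ? o : Array.isArray(prev) ? [...prev, o] : [prev, o];
  }
  // One entry per subgraph: key = subject IRI, value = JSON-LD text.
  const entries = new Map<string, string>();
  bySubject.forEach((node, subject) => entries.set(subject, JSON.stringify(node)));
  return entries;
}

// Read back only the requested subgraphs: one key lookup per id,
// instead of fetching and parsing the whole document.
function fetchSubgraphs(entries: Map<string, string>, ids: string[]): Record<string, unknown>[] {
  const result: Record<string, unknown>[] = [];
  for (const id of ids) {
    const value = entries.get(id);
    if (value !== undefined) result.push(JSON.parse(value));
  }
  return result;
}

const FOAF = "http://xmlns.com/foaf/0.1/";
const profile: Triple[] = [
  { s: "safe://alice", p: FOAF + "primaryTopic", o: { "@id": "safe://alice#me" } },
  { s: "safe://alice#me", p: FOAF + "name", o: "Alice" },
  { s: "safe://alice#me", p: FOAF + "knows", o: { "@id": "safe://bob#me" } },
  { s: "safe://alice#me", p: FOAF + "knows", o: { "@id": "safe://carol#me" } },
];

const entries = toEntries(profile);
const [me] = fetchSubgraphs(entries, ["safe://alice#me"]);
console.log(entries.size, me[FOAF + "name"]); // 2 Alice
```

With this layout a filter by subject becomes direct key lookups, and changing one subgraph rewrites a single entry. A filter by predicate, however, still has to read every entry, because the keys only index subjects; that is one of the trade-offs this layout raises.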

We also created some utilities to abstract the CRUD operations for WebIDs, but again, I’m keeping this out of this topic.

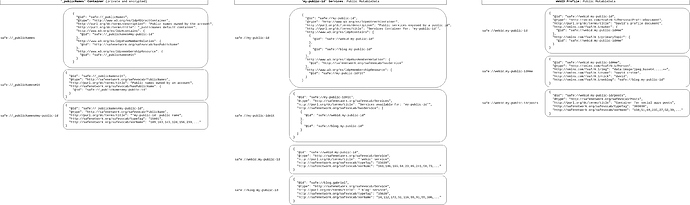

As an example, the image above is a snapshot of some RDF data stored on the SAFE Network using our PoC: in this case, three MutableData objects used for a WebID profile doc published at a human-readable safe:// URL. Each rounded box is an entry in its corresponding MutableData object; the strings to the left of each rounded box are the entries’ keys, and the contents of the rounded boxes are the entries’ values.

I hope this is clear enough to start the discussion. Again, the intention here is to share details of what we’ve done and the considerations we made when implementing our PoC, and hopefully to get some feedback, ideas and suggestions on the aspects explained here.