I am trying to build a global network that I intend to be the seed of a future community network.

My vault is a fork of safe_vault and uses a fork of maidsafe_utilities, but my modifications are minimal and concern only the logging of vault data. I want to be able to collect some data, but I also want my vault to stay interoperable with the original safe_vault so that people are not forced to use my fork.

But I came across several problems:

- Setting disable_external_reachability_requirement to false doesn’t work.

- Release mode doesn’t compile.

- I cannot create an account when the number of vaults is not exactly the min section size.

The first 2 problems are not blocking because I just leave disable_external_reachability_requirement set to true and compile in debug mode, but the last one is blocking. I have set min_section_size to 5: with 5 vaults running, account creation works, but with 6 vaults running it fails.

I can reproduce this problem both when creating a manager account and when creating a regular account:

When I try to create the manager account for invitations (./gen_invites --create) with 6 vaults I get this error:

Trying to create an account using given seed from file...

thread 'mainWARN 13:38:38.895597300 Core Event Loop [<unknown> <unknown>:188] Failed to receive response: Timeout

' panicked at 'WARN 13:38:38.896607000 Core Event Loop [<unknown> <unknown>:191] Could not put account to the Network: CoreError(Operation aborted - CoreError::OperationAborted)

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

! unwrap! called on Result::Err !

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

safe_app/examples/gen_invites.rs:186,16 in gen_invites

Err(CoreError(Operation aborted - CoreError::OperationAborted))

', /home/tfa/.cargo/registry/src/github.com-1ecc6299db9ec823/unwrap-1.2.1/src/lib.rs:67:25

note: Run with `RUST_BACKTRACE=1` for a backtrace.

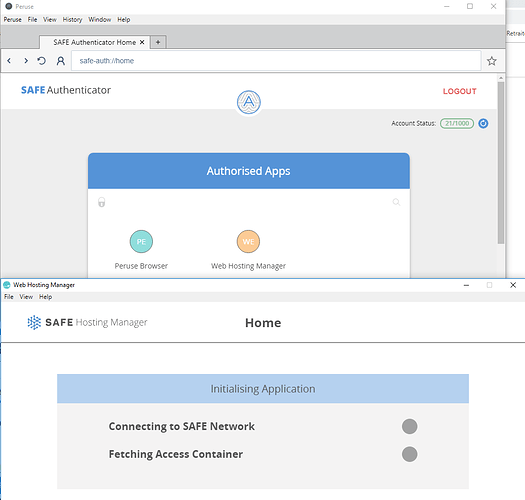

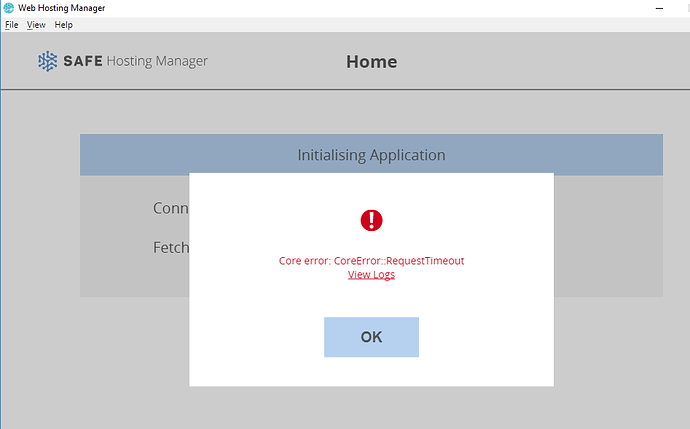

After creating the manager account successfully (with 5 vaults), I started the sixth vault again and tried to create a regular account with an invite. I got this error in the Peruse browser:

Core error: Blocking operation was cancelled

If I remove the sixth vault so that 5 vaults are running again, I can create an account with an invite (a new one, because the network considers the previous invitation already claimed).

The global Peruse log file (with the failed creation, followed by the successful one) is:

T 18-12-05 15:15:08.293993 [<unknown> <unknown>:49] Creating unregistered client.

T 18-12-05 15:15:08.296993 [crust::main::service service.rs:556] Network name: Some("tfa")

T 18-12-05 15:15:08.327002 [crust::main::service service.rs:82] Event loop started

T 18-12-05 15:15:08.327993 [crust::main::config_refresher config_refresher.rs:44] Entered state ConfigRefresher

T 18-12-05 15:15:08.327993 [<unknown> <unknown>:537] Waiting to get connected to the Network...

T 18-12-05 15:15:09.359988 [crust::main::active_connection active_connection.rs:63] Entered state ActiveConnection: PublicId(name: ce06c7..) -> PublicId(name: 75744a..)

T 18-12-05 15:15:09.359988 [crust::main::active_connection active_connection.rs:110] Connection Map inserted: PublicId(name: 75744a..) -> Some(ConnectionId { active_connection: Some(Token(11)), currently_handshaking: 0 })

D 18-12-05 15:15:09.360978 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(ce06c7..) Received BootstrapConnect from 75744a...

D 18-12-05 15:15:09.360978 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(ce06c7..) Sending BootstrapRequest to 75744a...

D 18-12-05 15:15:09.366978 [routing::states::client client.rs:91] Client(ce06c7..) State changed to client.

T 18-12-05 15:15:09.366978 [<unknown> <unknown>:555] Connected to the Network.

T 18-12-05 15:34:37.964360 [<unknown> <unknown>:124] Attempting to log into an acc using client keys.

T 18-12-05 15:34:37.965361 [crust::main::service service.rs:556] Network name: Some("tfa")

T 18-12-05 15:34:38.011359 [crust::main::service service.rs:82] Event loop started

T 18-12-05 15:34:38.011359 [crust::main::config_refresher config_refresher.rs:44] Entered state ConfigRefresher

T 18-12-05 15:34:38.011359 [<unknown> <unknown>:537] Waiting to get connected to the Network...

T 18-12-05 15:34:39.066345 [crust::main::active_connection active_connection.rs:63] Entered state ActiveConnection: PublicId(name: 9cd623..) -> PublicId(name: 75744a..)

T 18-12-05 15:34:39.066345 [crust::main::active_connection active_connection.rs:110] Connection Map inserted: PublicId(name: 75744a..) -> Some(ConnectionId { active_connection: Some(Token(11)), currently_handshaking: 0 })

D 18-12-05 15:34:39.067346 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(9cd623..) Received BootstrapConnect from 75744a...

D 18-12-05 15:34:39.067346 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(9cd623..) Sending BootstrapRequest to 75744a...

D 18-12-05 15:34:39.073345 [crust::main::active_connection active_connection.rs:140] PublicId(name: 9cd623..) - Failed to read from socket: ZeroByteRead

I 18-12-05 15:34:39.073345 [routing::states::bootstrapping bootstrapping.rs:316] Bootstrapping(9cd623..) Connection failed: The chosen proxy node already has connections to the maximum number of clients allowed per proxy.

T 18-12-05 15:34:39.073345 [crust::main::active_connection active_connection.rs:227] Connection Map removed: PublicId(name: 75744a..) -> None

D 18-12-05 15:34:39.073345 [routing::states::bootstrapping bootstrapping.rs:365] Bootstrapping(9cd623..) Dropping bootstrap node PublicId(name: 75744a..) and retrying.

I 18-12-05 15:34:39.073345 [routing::states::bootstrapping bootstrapping.rs:141] Bootstrapping(9cd623..) Lost connection to proxy PublicId(name: 75744a..).

T 18-12-05 15:34:40.170331 [crust::main::active_connection active_connection.rs:63] Entered state ActiveConnection: PublicId(name: 9cd623..) -> PublicId(name: adaad7..)

T 18-12-05 15:34:40.170331 [crust::main::active_connection active_connection.rs:110] Connection Map inserted: PublicId(name: adaad7..) -> Some(ConnectionId { active_connection: Some(Token(20)), currently_handshaking: 0 })

D 18-12-05 15:34:40.170331 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(9cd623..) Received BootstrapConnect from adaad7...

D 18-12-05 15:34:40.170331 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(9cd623..) Sending BootstrapRequest to adaad7...

D 18-12-05 15:34:40.189330 [routing::states::client client.rs:91] Client(9cd623..) State changed to client.

T 18-12-05 15:34:40.189330 [<unknown> <unknown>:555] Connected to the Network.
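
Most of the log above is trace and debug output, and the one line that shows a refused bootstrap (the "maximum number of clients allowed per proxy" message) is easy to miss. Here is a minimal sketch for filtering such a log, assuming every line follows the layout seen above: a level letter, a timestamp, a bracketed source location, then the message. The names `LogLine`, `parse_log_line` and `notable_lines` are hypothetical helpers of mine, not part of any SAFE crate, and the `W` and `E` level letters are an assumption because only `T`, `D` and `I` appear in this log.

```rust
/// One parsed log line: level letter, timestamp, source location, message.
#[derive(Debug, PartialEq)]
struct LogLine<'a> {
    level: char,
    timestamp: &'a str,
    source: &'a str,
    message: &'a str,
}

/// Parses a line such as
/// `I 18-12-05 15:34:39.073345 [module file.rs:316] message`.
/// Returns None for blank lines or lines in any other layout.
fn parse_log_line(line: &str) -> Option<LogLine<'_>> {
    let line = line.trim();
    let mut chars = line.chars();
    let level = chars.next()?;
    // Assumed set of level letters; only T, D and I occur in the log above.
    if !"TDIWE".contains(level) {
        return None;
    }
    // The level letter must be followed by a single space.
    let rest = chars.as_str().strip_prefix(' ')?;
    // The source location is the first bracketed group on the line.
    let open = rest.find('[')?;
    let close = open + rest[open..].find("] ")?;
    Some(LogLine {
        level,
        timestamp: rest[..open].trim(),
        source: &rest[open + 1..close],
        message: &rest[close + 2..],
    })
}

/// Keeps only lines above debug level (everything except T and D).
fn notable_lines(log: &str) -> Vec<LogLine<'_>> {
    log.lines()
        .filter_map(parse_log_line)
        .filter(|l| l.level != 'T' && l.level != 'D')
        .collect()
}
```

Calling `notable_lines` on the contents of the Peruse log file and printing each `message` would leave only the info-level lines, which here are the two about the refused proxy connection.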

Of course running 5 vaults permanently isn’t a workaround because I want the network to grow and when it becomes public I won’t control the number of vaults. So, what should I do to ensure that this kind of error doesn’t happen when the network is live?

I suppose my firewall configuration is correct because the setup with 5 vaults is working, but maybe not, so here are the ports allowed for inbound connections:

- TCP/22 (for ssh)

- TCP/2376, TCP/2377, UDP/4789, UDP/7946, TCP/7946 (for Docker; I use an overlay network to log data, while the safe exchanges go over the internet on port 5483)

- TCP/5483, UDP/5484 (for safe vault)
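
To rule out the firewall, the inbound TCP port could be probed from a machine outside the network. This is a minimal sketch using only the Rust standard library; `tcp_port_open` is a hypothetical helper name of mine, and the address passed to it is a placeholder for the vault's public IP. It only covers TCP/5483: a successful TCP connect says nothing about UDP/5484.

```rust
use std::net::{TcpStream, ToSocketAddrs};
use std::time::Duration;

/// Returns true if a TCP connection to `addr` (for example "<vault-ip>:5483")
/// succeeds within `timeout`. Hypothetical helper, not part of safe_vault.
fn tcp_port_open(addr: &str, timeout: Duration) -> bool {
    // Resolve the address; an unresolvable address counts as unreachable.
    let resolved = match addr.to_socket_addrs() {
        Ok(addrs) => addrs,
        Err(_) => return false,
    };
    // Try every resolved address until one accepts the connection.
    for socket_addr in resolved {
        if TcpStream::connect_timeout(&socket_addr, timeout).is_ok() {
            return true;
        }
    }
    false
}
```

Running `tcp_port_open("<vault-ip>:5483", Duration::from_secs(3))` against each vault, from a host that is not on the Docker overlay network, would show whether the sixth vault is reachable in the same way as the first five.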