Hey @nbaksalyar, can you see if you can reproduce this? Thanks!

I have recorded a session with asciinema that demonstrates the problem on a local network, without docker and with crates from Maidsafe exclusively.

Hi @tfa, we were able to reproduce this behaviour and we’re looking into it.

Thanks for the report!

Hi @tfa,

Could you please try one thing: change the routing config file for the client apps/browser to be the same as on the vaults side (i.e. change the dev section in <app name>.routing.config). This should do the trick, because clients use routing too, and min_section_size should be the same on both sides.
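For anyone who prefers to script that change, here is a minimal sketch, assuming both routing config files are plain JSON with a top-level "dev" key as in the snippets posted in this thread. The function names and any file paths you pass in are hypothetical, and the sketch overwrites the client file, so keep a backup.

```python
import json
from pathlib import Path


def merge_dev_section(client_cfg: dict, vault_cfg: dict) -> dict:
    """Return a copy of the client config whose "dev" section is the vault's."""
    merged = dict(client_cfg)
    merged["dev"] = vault_cfg.get("dev")
    return merged


def copy_dev_section(vault_path: Path, client_path: Path) -> None:
    """Rewrite the client routing config so its "dev" section matches the vault's."""
    vault_cfg = json.loads(vault_path.read_text())
    # A client config that does not exist yet is treated as empty.
    client_cfg = json.loads(client_path.read_text()) if client_path.exists() else {}
    merged = merge_dev_section(client_cfg, vault_cfg)
    client_path.write_text(json.dumps(merged, indent=2) + "\n")
```

Calling copy_dev_section with the vault's routing config and the client's <app name>.routing.config leaves the client with the same min_section_size as the vaults.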

I was using "dev": null in the client routing config file and copying the file from the vault corrects the problem. Thank you very much and sorry for the disturbance.

Cool use of asciinema!

Just some general things I observed

- In general (but not always) it’s probably more reliable to build from the latest tagged version, not from master (although in this case some of the master releases seemed to be the same as the tagged version), e.g.

git checkout 0.17.2

I realise sometimes master (or some non-tagged commit) is needed though…

- Using --release during the build is going to give an optimized binary and faster performance, rather than leaving it out and getting a debug build.

I am sorry again, but I still have some problems.

Now with routing config file modified as you indicated I can create accounts, both a manager account with gen_invites and regular accounts with invitations in Peruse but I can’t get WHM working:

My configuration is the following:

- min section size = 5

- network has 8 nodes

- Peruse version is 0.7.0

- WHM version is 0.5.0

- OS is Windows 10

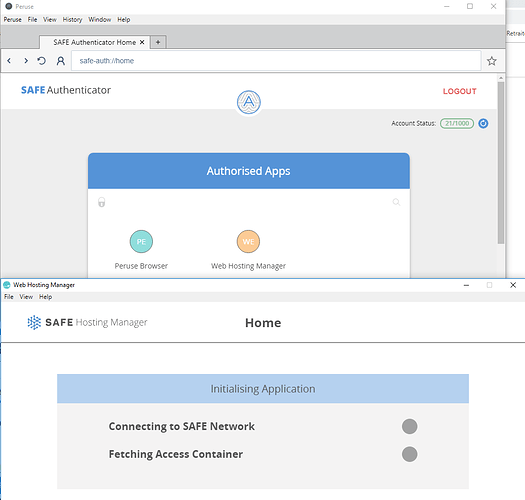

I can authorize both Peruse and WHM but then I am stuck in this screen in WHM:

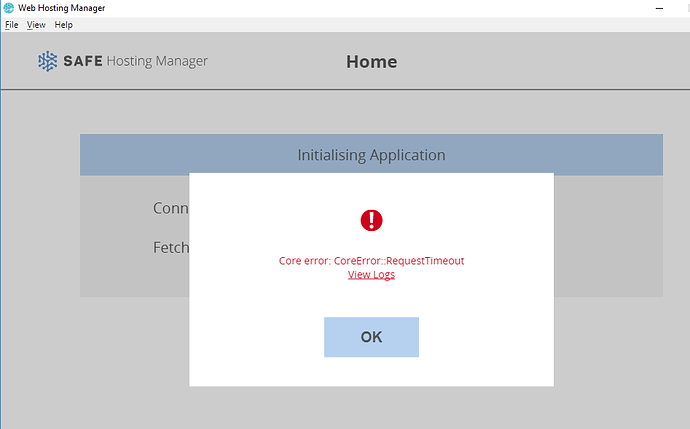

Then there is this final screen:

WHM log is:

T 18-12-16 20:54:32.292830 [<unknown> <unknown>:124] Attempting to log into an acc using client keys.

T 18-12-16 20:54:32.350830 [<unknown> <unknown>:537] Waiting to get connected to the Network...

D 18-12-16 20:54:33.439816 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(ef1dd8..) Received BootstrapConnect from d219e3...

D 18-12-16 20:54:33.439816 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(ef1dd8..) Sending BootstrapRequest to d219e3...

I 18-12-16 20:54:33.458815 [routing::states::bootstrapping bootstrapping.rs:316] Bootstrapping(ef1dd8..) Connection failed: The chosen proxy node already has connections to the maximum number of clients allowed per proxy.

D 18-12-16 20:54:33.458815 [routing::states::bootstrapping bootstrapping.rs:365] Bootstrapping(ef1dd8..) Dropping bootstrap node PublicId(name: d219e3..) and retrying.

I 18-12-16 20:54:33.459816 [routing::states::bootstrapping bootstrapping.rs:141] Bootstrapping(ef1dd8..) Lost connection to proxy PublicId(name: d219e3..).

D 18-12-16 20:54:34.560800 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(ef1dd8..) Received BootstrapConnect from 8147e5...

D 18-12-16 20:54:34.560800 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(ef1dd8..) Sending BootstrapRequest to 8147e5...

I 18-12-16 20:54:34.637801 [routing::states::bootstrapping bootstrapping.rs:316] Bootstrapping(ef1dd8..) Connection failed: The chosen proxy node already has connections to the maximum number of clients allowed per proxy.

D 18-12-16 20:54:34.636800 [crust::main::active_connection active_connection.rs:140] PublicId(name: ef1dd8..) - Failed to read from socket: ZeroByteRead

D 18-12-16 20:54:34.637801 [routing::states::bootstrapping bootstrapping.rs:365] Bootstrapping(ef1dd8..) Dropping bootstrap node PublicId(name: 8147e5..) and retrying.

I 18-12-16 20:54:34.637801 [routing::states::bootstrapping bootstrapping.rs:141] Bootstrapping(ef1dd8..) Lost connection to proxy PublicId(name: 8147e5..).

D 18-12-16 20:54:35.905782 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(ef1dd8..) Received BootstrapConnect from 56305b...

D 18-12-16 20:54:35.905782 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(ef1dd8..) Sending BootstrapRequest to 56305b...

D 18-12-16 20:54:36.023781 [crust::main::active_connection active_connection.rs:140] PublicId(name: ef1dd8..) - Failed to read from socket: ZeroByteRead

I 18-12-16 20:54:36.023781 [routing::states::bootstrapping bootstrapping.rs:316] Bootstrapping(ef1dd8..) Connection failed: The chosen proxy node already has connections to the maximum number of clients allowed per proxy.

D 18-12-16 20:54:36.023781 [routing::states::bootstrapping bootstrapping.rs:365] Bootstrapping(ef1dd8..) Dropping bootstrap node PublicId(name: 56305b..) and retrying.

I 18-12-16 20:54:36.024781 [routing::states::bootstrapping bootstrapping.rs:141] Bootstrapping(ef1dd8..) Lost connection to proxy PublicId(name: 56305b..).

D 18-12-16 20:54:37.289764 [routing::states::bootstrapping bootstrapping.rs:266] Bootstrapping(ef1dd8..) Received BootstrapConnect from 1211bf...

D 18-12-16 20:54:37.289764 [routing::states::bootstrapping bootstrapping.rs:332] Bootstrapping(ef1dd8..) Sending BootstrapRequest to 1211bf...

D 18-12-16 20:54:37.420763 [routing::states::client client.rs:91] Client(ef1dd8..) State changed to client.

T 18-12-16 20:54:37.420763 [<unknown> <unknown>:555] Connected to the Network.

T 18-12-16 20:54:37.423763 [<unknown> <unknown>:304] GetMDataValue for e5c028..

D 18-12-16 20:54:38.026309 [routing::states::client client.rs:209] Client(ef1dd8..) NotEnoughSignatures

D 18-12-16 20:54:38.274653 [routing::states::client client.rs:209] Client(ef1dd8..) NotEnoughSignatures

T 18-12-16 20:54:57.435422 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 1, timer_token: 4, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:55:17.435885 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 2, timer_token: 5, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:55:37.436635 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 3, timer_token: 6, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:55:57.438302 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 4, timer_token: 7, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:56:17.438839 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 5, timer_token: 8, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:56:37.453844 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 6, timer_token: 9, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:56:57.454602 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 7, timer_token: 10, expires_at: Some(Instant { t: 230712746786 }) }

T 18-12-16 20:57:17.461707 [routing::states::client client.rs:397] Client(ef1dd8..) Timed out waiting for Ack(791f..): UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 8, timer_token: 11, expires_at: Some(Instant { t: 230712746786 }) }

D 18-12-16 20:57:17.461707 [routing::states::client client.rs:406] Client(ef1dd8..) Message unable to be acknowledged - giving up. UnacknowledgedMessage { routing_msg: RoutingMessage { src: Client { client_name: ef1dd8.., proxy_node_name: 1211bf.. }, dst: NaeManager(name: e5c028..), content: UserMessagePart { 1/1, priority: 3, cacheable: false, fcb3a8.. } }, route: 8, timer_token: 11, expires_at: Some(Instant { t: 230712746786 }) }

D 18-12-16 20:57:37.425656 [<unknown> <unknown>:35] **ERRNO: -17** CoreError(Request has timed out - CoreError::RequestTimeout)

This could be caused by the config files not being picked up for some reason. I’ll try to reproduce the same steps and will get back to you with the results soon.

And thanks for confirming that the routing config change worked for you!

I have the same problem with min_section_size=8 and a network of 8 nodes.

But if I put back “dev”: null in peruse config file then WHM works again.

So I seem to be in an inextricable situation because I needed to set “dev” to the same value as of a vault config file to correct the earlier problem of accounts that couldn’t be created in a network with 6 vaults and min_section_size=5.

I want to check whether I get the same problem with 9 vaults and min_section_size=8, but I haven’t managed to get the ninth vault to start. I will investigate this later.

This is interesting, I was going to chime in on this thread and it seems I didn’t. I’m having some of the same issues, I have considered scaling the network up to 16-20 nodes to see if the issues continue.

On a related note, re: “config files not being picked up”

Which file does the browser officially use? When I download the latest release (yesterday at least) it looks like:

/peruse

/peruse.crust.config

/resources/Peruse.crust.config

(I don’t recall which seem to work, I generally add the files in both folders)

I update them to look like:

/peruse

/peruse.crust.config

/peruse.routing.config

/resources/Peruse.crust.config

/resources/Peruse.routing.config

I assume one of these is correct as the vault routing files follow the same format (in particular, appname.crust.config and appname.routing.config) but since the release doesn’t appear to have a default routing file, is there a chance that it is looking for another name?

So, finally this wasn’t a good idea. The only configuration that works for me:

- leave "dev": null in routing config files for clients (gen_invites and Peruse)

- use "min_section_size": 8 for the network

Under these conditions I can create accounts and use WHM with a network having strictly more nodes than min_section_size (though I tested only up to 10 nodes, because VPSes that can pass the resource proof start to get costly)

To be precise:

- 6 nodes with min section size 5 don’t work

- 5 nodes with min section size 5 work

- 8, 9 or 10 nodes with min section size 8 work.

Edit:

I also tested 8 nodes with min section size 7 and they don’t work either (same symptoms: cannot create account or WHM not working depending on content of routing config file). This time I did the test with newly released deliveries (SAFE Browser v0.11.0 and WHM v0.5.1).

The only workable configuration seems to be min section size 8. The problem with that value is a too expensive resource proof. There is a big threshold between min section size 7 and 8:

- a 5 €/month VPS is enough to pass it with min section size 7

- a 40 €/month VPS is needed to pass it with min section size 8

As I didn’t succeed in doing it, my question is: is there a way to get "min_section_size":7 working?

If this works, it’d actually point to the config files getting mucked over likely as @nbaksalyar mentioned. For this permutation, you could essentially leave min_section_size out for vaults as well.

The main issue here is that when the vaults’ and clients’ min_section_size do not match, accumulation of messages goes for a toss, hence the timeouts/NotEnoughSignatures and so on: the vaults would consider a message accumulated while the clients do not see it the same way. Network startup conditions would also interfere here with minimal nodes, giving you some strange output specific to the number of nodes in the network relative to the accumulation thresholds.

Leaving the config file override as null/not-present or explicitly setting min_section_size:8 is net the same thing, as 8 is the default min_section_size (routing::MIN_SECTION_SIZE) when there is no override provided. If you alter the default there and have this recompiled for both clients and vaults, then you’d not be relying on a config override at all, but it’d be a pretty cumbersome and annoying process I’d imagine, and I would rather opt to find out why the config files aren’t detected.
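To make the "null is the same as 8" point concrete, here is a rough sketch of how the effective value could be worked out from a routing config. It is not the routing crate's actual parsing code: the default of 8 is taken from this post, the function names are hypothetical, and a "dev" section without min_section_size raises an error here on the assumption (from this thread) that the schema needs all the dev fields.

```python
import json

# Default quoted in this thread for routing::MIN_SECTION_SIZE. Treat it as an
# assumption and check it against the routing version you actually build.
DEFAULT_MIN_SECTION_SIZE = 8


def effective_min_section_size(config_text):
    """Return the min_section_size a routing config would yield.

    Pass None to stand for a config file that is not present.
    """
    if config_text is None:
        return DEFAULT_MIN_SECTION_SIZE
    dev = json.loads(config_text).get("dev")
    if dev is None:  # "dev": null means no override at all
        return DEFAULT_MIN_SECTION_SIZE
    return dev["min_section_size"]


def sizes_match(vault_text, client_text):
    """True when vaults and clients would end up with the same section size."""
    return effective_min_section_size(vault_text) == effective_min_section_size(client_text)
```

With this, a vault config carrying min_section_size 5 and a client config carrying "dev": null do not match (5 against 8), which is the mismatch described above.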

Some of these electron apps package two sets of config files: one for the main app binary and one for the Electron Helper (AppName Helper.*.config). I’d assume that’s where, either in the browser (authentication related) or WHM itself, a config override isn’t getting applied as expected by the config_file_handler crate when it ends up looking for a config override. On mobile I recall another set_additional_search_paths API was also utilised to provide an extra lookup path for config/log configs; I’m assuming those aren’t used on desktop (@Krishna / @bochaco can hopefully confirm if we do anything but the default lookups).

If you try find . -type f -iname "*config" in the browser package folder after an initial run it should show you all the config files in the package (browser for example should show the Helper binary ones too).
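On Windows, where find may not be at hand, roughly the same scan can be sketched in Python. This is an approximation of the command above rather than an exact equivalent (symlink handling may differ), and find_config_files is a hypothetical helper name.

```python
from pathlib import Path


def find_config_files(root):
    """List regular files under root whose name ends in "config", ignoring case."""
    return sorted(
        p for p in Path(root).rglob("*")
        if p.is_file() and p.name.lower().endswith("config")
    )


if __name__ == "__main__":
    # Run from inside the browser or WHM package folder after an initial run.
    for path in find_config_files("."):
        print(path)
```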

Can you maybe provide the binary packages you’re using for both WHM and the browser, and we can try it locally to see if the overrides are getting applied? I’m assuming that because of these crappy binary names/multiple occurrences of config files, something is amiss somewhere.

Don’t worry about passing binaries. I could reproduce it with the latest release binaries themselves: Browser 0.11.0 and WHM 0.5.1

Routing config override I used:

{

"dev": {

"allow_multiple_lan_nodes": true,

"disable_client_rate_limiter": true,

"disable_resource_proof": true,

"min_section_size": 5

}

}

min_section_size is what we’re interested in; the rest don’t really impact clients, they were just needed to keep the config schema intact ofc.

I had to provide such a config to both the browser and WHM.

Browser (relative path from .app pkg): ./SAFE Browser.app/Contents/Frameworks/SAFE Browser Helper.app/Contents/MacOS/SAFE Browser Helper.routing.config

WHM (relative path from .app pkg): ./web-hosting-manager.app/Contents/Frameworks/web-hosting-manager Helper.app/Contents/MacOS/web-hosting-manager Helper.routing.config

Note that paths might not match exactly on other platforms, and especially with these electron “helper” binaries the names of config files become a nightmare to monitor and maintain. One approach I generally take is to strip the package/folder of any config files (move them to preserve the originals), then give the apps a dry run and scan with a find command (find . -type f -iname "*config") to see where and under what names the default empty config files get created, and then just modify them accordingly. Even this isn’t a great approach: with the browser, you’d see it create an empty crust and routing config, but for other apps such as WHM only the crust config gets created by default, since they use a BootstrapConfig provided by the auth via a different constructor, and while routing configs can be overridden, these apps don’t seem to create an empty one. Still, just with the default crust config, I could get the expected config file name and path and create the routing config file myself.
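The last step (deriving the routing config name from the crust config the app created, then writing the override next to it) could be sketched like this. It assumes the naming pattern seen in this thread, <name>.crust.config alongside <name>.routing.config; the helper names are hypothetical, the override values are the ones posted above, and an existing routing config at the target path is overwritten.

```python
import json
from pathlib import Path

# Override values copied from the routing config posted in this thread.
ROUTING_OVERRIDE = {
    "dev": {
        "allow_multiple_lan_nodes": True,
        "disable_client_rate_limiter": True,
        "disable_resource_proof": True,
        "min_section_size": 5,
    }
}


def routing_path_for(crust_config: Path) -> Path:
    """Map '<name>.crust.config' to '<name>.routing.config' in the same folder."""
    suffix = ".crust.config"
    if not crust_config.name.endswith(suffix):
        raise ValueError(f"not a crust config: {crust_config}")
    stem = crust_config.name[: -len(suffix)]
    return crust_config.with_name(stem + ".routing.config")


def write_routing_override(crust_config: Path, override=ROUTING_OVERRIDE) -> Path:
    """Write the override beside the given crust config and return its path."""
    target = routing_path_for(crust_config)
    target.write_text(json.dumps(override, indent=2) + "\n")
    return target
```

For example, pointing it at a "web-hosting-manager Helper.crust.config" would produce "web-hosting-manager Helper.routing.config" in the same folder.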

That was it: providing the expected config override sorts out the invalid accumulation issue, as clients are then using the same expected grp_size as vaults.

Side notes from just testing this right now:

- We really need a better config management process. Mucking about with the filenames and scanning for presence of these files is a pain made worse when needing to edit/work with local networks. Gets more convoluted with these bundled in signed packages/other lookup/override methods.

- Client grp_size expectation can just get provided via bootstrap response and removed from the overrides all-together. Clients can then choose to have self limits for sigs and either pick a diff proxy/not accordingly as per prev discussions. Just noting this as Its just something that wasn’t implemented at A2 stage.

- Release packages need better resource bundling. Currently the browser package has around 4 crust config files with various names in multiple locations, thereby needing other means to identify the valid config file. We should really be pruning dev config files/… before release packaging.

- The various libraries should probably log config overrides to indicate when they are applied successfully. This doesn’t seem to be part of the std lib logs at the thresholds selected in the provided log.toml.

- QA check scope needs to include dev config options too, as non happy path testing seems a bit off. I’m assuming such dev options aren’t tested as rigorously as mock/prod setups.

cc @StephenC @Krishna @ustulation ^^

I raised the following issue for this, although on Linux I only see two crust.config files in the browser package and one of them could be removed: OSX browser package has around 4 crust config files with various names in multiple locations · Issue #497 · maidsafe/sn_browser · GitHub

I also gave it a try to run a local network (currently trying also with min_section_size 5 and running 6 nodes on the same PC), using the latest from master of safe_vault repo. It seems to work fine although I see that some operations take a few minutes (or several seconds at least) until they are completed, e.g.:

- when launching each of the nodes, it takes a few minutes before I see the log message that a new node has joined the network, this happened to me with every node I launched so far.

- when the browser is launched, it also takes a few minutes until it’s able to connect to the local network

- creating the account is also stuck for a couple of minutes until it is finally created

- I was also able to get the WHM v0.5.1 to connect to the local network using the same routing.config, but it also was stuck for a moment after receiving the auth response before it finally connected to the network.

- I was able to create a public id and upload a website, but it wasn’t as fast as I expected either; it was taking several seconds for some of the files it was uploading.

- having a webapp to connect to the network also took a couple of minutes after it received the auth response

Does anyone know why I’m experiencing these delays?

Are you also seeing these delays when interacting with the local network?

I’m not seeing my CPU or memory being stressed at all when these delays are happening.

Not really, maybe you’re running debug versions?

not something I observed as a common occurrence locally. I have seen spikes for activity but not consistent delays like what you mention.

I’m indeed running debug versions, I’ll try with building for release tomorrow and see what happens, thanks @Viv

I’m seeing the same delays with the release builds, no difference really, at least with launching vaults.

When launching each vault I see the first two minutes pass after the following log entry before anything else is logged:

T 18-12-27 10:50:34.579878 send method=Get, url="http://192.168.1.254/RootDevice.xml", client=Client { redirect_policy: FollowAll, read_timeout: None, write_timeout: None, proxy: None }

vault log of first two minutes

Running safe_vault v0.18.0

==========================

T 18-12-27 10:50:34.573006 Network name: Some("bochaco")

T 18-12-27 10:50:34.579878 send method=Get, url="http://192.168.1.254/RootDevice.xml", client=Client { redirect_policy: FollowAll, read_timeout: None, write_timeout: None, proxy: None }

T 18-12-27 10:50:34.579962 host="192.168.1.254"

T 18-12-27 10:50:34.580001 port=80

D 18-12-27 10:50:34.580063 http scheme

T 18-12-27 10:52:45.737913 registering with poller

T 18-12-27 10:52:45.738008 registering with poller

T 18-12-27 10:52:45.738138 Event loop started

T 18-12-27 10:52:45.738227 wakeup thread: sleep_until_tick=18446744073709551615; now_tick=0

T 18-12-27 10:52:45.738294 Entered state ConfigRefresher

T 18-12-27 10:52:45.738310 sleeping; tick_ms=100; now_tick=0; blocking sleep

T 18-12-27 10:52:45.738385 setting timeout; delay=30.000512674s; tick=300; current-tick=0

T 18-12-27 10:52:45.738442 advancing the wakeup time; target=300; curr=18446744073709551615

T 18-12-27 10:52:45.738466 unparking wakeup thread

T 18-12-27 10:52:45.738515 inserted timeout; slot=44; token=Token(0)

T 18-12-27 10:52:45.738522 wakeup thread: sleep_until_tick=300; now_tick=0

T 18-12-27 10:52:45.738571 sleeping; tick_ms=100; now_tick=0; sleep_until_tick=300; duration=30000

T 18-12-27 10:52:45.738885 registering with poller

D 18-12-27 10:52:45.739693 Node(c7a3a9..()) State changed to node.

And I can see that when launching another vault the same type of delay and log is seen more than once before the vault finally joins the network:

another vault when trying to join also shows 2 mins delays in between some log entries

...

T 18-12-27 10:55:28.581990 registering with poller

T 18-12-27 10:55:28.582023 unlinking timeout; slot=210; token=Token(2)

T 18-12-27 10:55:28.582047 setting timeout; delay=21.052219323s; tick=211; current-tick=11

T 18-12-27 10:55:28.582063 inserted timeout; slot=211; token=Token(2)

T 18-12-27 10:55:28.582155 Graceful event loop exit.

T 18-12-27 10:55:28.582475 Network name: Some("bochaco")

T 18-12-27 10:55:29.587475 send method=Get, url="http://192.168.1.254/RootDevice.xml", client=Client { redirect_policy: FollowAll, read_timeout: None, write_timeout: None, proxy: None }

T 18-12-27 10:55:29.587550 host="192.168.1.254"

T 18-12-27 10:55:29.587591 port=80

D 18-12-27 10:55:29.587622 http scheme

T 18-12-27 10:57:40.649921 registering with poller

T 18-12-27 10:57:40.650024 registering with poller

T 18-12-27 10:57:40.650283 Event loop started

T 18-12-27 10:57:40.650285 wakeup thread: sleep_until_tick=18446744073709551615; now_tick=0

T 18-12-27 10:57:40.650423 sleeping; tick_ms=100; now_tick=0; blocking sleep

T 18-12-27 10:57:40.650590 Entered state ConfigRefresher

T 18-12-27 10:57:40.650779 setting timeout; delay=30.000875289s; tick=300; current-tick=0

... (more log entries here)

T 18-12-27 10:57:41.663378 setting timeout; delay=121.013483612s; tick=1210; current-tick=11

T 18-12-27 10:57:41.663397 inserted timeout; slot=186; token=Token(1)

T 18-12-27 10:57:41.664149 Node(55b35d..()) Got routing message RoutingMessage { src: Section(name: 55b35d..), dst: Client { client_name: 55b35d.., proxy_node_name: c7a3a9.. }, content: NodeApproval { {VersionedPrefix { prefix: Prefix(), version: 0 }: {PublicId(name: 55b35d..), PublicId(name: c7a3a9..)}} } }.

I 18-12-27 10:57:41.664284 Node(55b35d..()) Resource proof challenges completed. This node has been approved to join the network!

T 18-12-27 10:57:41.664338 Node(55b35d..()) Node approval completed. Prefixes: {Prefix()}

T 18-12-27 10:57:41.664207 registering with poller

T 18-12-27 10:57:41.664440 unlinking timeout; slot=210; token=Token(2)

T 18-12-27 10:57:41.664460 setting timeout; delay=21.014565346s; tick=210; current-tick=11

T 18-12-27 10:57:41.664480 inserted timeout; slot=210; token=Token(2)

T 18-12-27 10:57:41.664520 unlinking timeout; slot=186; token=Token(1)

T 18-12-27 10:57:41.664535 setting timeout; delay=121.014640592s; tick=1210; current-tick=11

T 18-12-27 10:57:41.664555 inserted timeout; slot=186; token=Token(1)

I 18-12-27 10:57:41.664735 --------------------------------------------

I 18-12-27 10:57:41.664757 | Node(55b35d..()) - Routing Table size: 1 |

I 18-12-27 10:57:41.664780 | Exact network size: 2 |

I 18-12-27 10:57:41.664802 --------------------------------------------

T 18-12-27 10:57:41.664826 Node(55b35d..()) Scheduling a SectionUpdate for 30 seconds from now.

T 18-12-27 10:57:43.656207 wakeup thread: sleep_until_tick=30; now_tick=30

T 18-12-27 10:57:43.656303 setting readiness from wakeup thread

T 18-12-27 10:57:43.656373 wakeup thread: sleep_until_tick=18446744073709551615; now_tick=30

T 18-12-27 10:57:43.656420 tick_to; target_tick=30; current_tick=11

T 18-12-27 10:57:43.656458 sleeping; tick_ms=100; now_tick=30; blocking sleep

T 18-12-27 10:57:43.656488 ticking; curr=Token(18446744073709551615)

Another thing I’m seeing in the 5th vault I launched is that it has retried joining more than once. I see the following logged ~15 mins after I launched it, and it still cannot join:

...

T 18-12-27 11:28:54.258668 ticking; curr=Token(18446744073709551615)

T 18-12-27 11:28:54.258684 unsetting readiness

T 18-12-27 11:28:54.258700 advancing the wakeup time; target=3610; curr=3901

T 18-12-27 11:28:54.258720 unparking wakeup thread

T 18-12-27 11:28:54.258746 wakeup thread: sleep_until_tick=3610; now_tick=3601

T 18-12-27 11:28:54.258782 sleeping; tick_ms=100; now_tick=3601; sleep_until_tick=3610; duration=900

I 18-12-27 11:28:55.128755 JoiningNode(4edb33..()) Failed to get relocated name from the network, so restarting.

D 18-12-27 11:28:55.128817 State::JoiningNode(4edb33..()) Terminating state machine

W 18-12-27 11:28:55.128844 Restarting Vault

T 18-12-27 11:28:55.131012 Graceful event loop exit.

T 18-12-27 11:28:55.131675 Network name: Some("bochaco")

T 18-12-27 11:28:55.134810 send method=Get, url="http://192.168.1.254/RootDevice.xml", client=Client { redirect_policy: FollowAll, read_timeout: None, write_timeout: None, proxy: None }

T 18-12-27 11:28:55.134849 host="192.168.1.254"

T 18-12-27 11:28:55.134859 port=80

D 18-12-27 11:28:55.134875 http scheme

T 18-12-27 11:31:05.641679 registering with poller

T 18-12-27 11:31:05.641747 registering with poller

T 18-12-27 11:31:05.641861 Event loop started

...

I’m not sure why that last one cannot join, but the jumps in the timestamps of the logs all seem to be due to waiting for a response to that event trying to get "http://192.168.1.254/RootDevice.xml"?
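Spotting those jumps by eye gets tedious in long logs, so here is a small sketch that flags them. It assumes the line layout seen in the logs above (a level letter, then a yy-mm-dd date and a time with microseconds); find_gaps and the 60 second threshold are hypothetical choices, not part of any vault tooling.

```python
import re
from datetime import datetime, timedelta

# Matches e.g. "T 18-12-27 10:50:34.579878 send method=Get, ..."
LINE_RE = re.compile(r"^[A-Z] (\d{2}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{6}) ")


def find_gaps(lines, threshold=timedelta(seconds=60)):
    """Yield (gap, previous_line, next_line) for consecutive timestamped lines
    whose timestamps are more than `threshold` apart."""
    prev_time = prev_line = None
    for line in lines:
        match = LINE_RE.match(line)
        if not match:
            continue  # banner lines, blank lines and "..." markers
        stamp = datetime.strptime(match.group(1), "%y-%m-%d %H:%M:%S.%f")
        if prev_time is not None and stamp - prev_time > threshold:
            yield stamp - prev_time, prev_line, line
        prev_time, prev_line = stamp, line
```

Fed the vault logs above, this would report the line logged just before each two-minute stall, which makes it easy to check whether every stall follows the RootDevice.xml request.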

I’ll share the logs of this run with the vault team.

Ok, thanks a lot @Viv. Just to confirm here that the delays I was experiencing are definitely related to the LAN I was using with my home WiFi (something going on when I use that router). As soon as I switched to my cellphone hotspot LAN the problem was completely gone, and I’m able to run 5 vaults and also 6 vaults, which join the network quickly. Everything else is also quick, i.e. creating an account, uploading a couple of webapps with the WHM, and using those webapps from the browser, generating some data as well.

This is what I’m using for the vaults, browser and WHM as routing config:

{

"dev": {

"allow_multiple_lan_nodes": true,

"disable_client_rate_limiter": true,

"disable_resource_proof": true,

"min_section_size": 5

}

}

I will try to also run a network with more vaults as well as with min_section_size 8 as it was mentioned above to see if I encounter any issues.

EDIT: I can confirm that it works for me also with min_section_size 8, with 8, 9, and even 10 vaults, this is the crust config I’m using:

{

"hard_coded_contacts": [],

"tcp_acceptor_port": null,

"force_acceptor_port_in_ext_ep": false,

"service_discovery_port": null,

"bootstrap_cache_name": null,

"whitelisted_node_ips": null,

"whitelisted_client_ips": null,

"network_name": "bochaco",

"dev": null

}

bump

purely so this topic does not disappear after 2 months - much useful info in here